The Open-Source Agentic Surge: What the 2026 Benchmarks Really Mean

- The Numbers Don’t Lie

- Why This Moment Matters

- What’s Real, What’s Overhyped, and What We’re Still Missing

- The Road Ahead

The Numbers Don’t Lie

In the first quarter of 2026, several open-source projects released agentic frameworks that have sent shockwaves through the industry. Benchmarks from independent evaluators show these agents, running on local hardware, completing complex multi-step tasks at rates that rival GPT-4o and Claude 3.5 in specific domains.

What changed? A combination of better base models (such as the latest Llama derivatives), improved memory systems, and more sophisticated tool-calling architectures. Unlike earlier attempts, which often devolved into infinite loops or hallucinated actions, these new systems build in self-verification loops and better error recovery.
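To make the idea of a self-verification loop concrete, here is a minimal Python sketch. It is not taken from any of the frameworks mentioned; `propose` and `verify` are hypothetical stand-ins for a model call and a checker (a test run, a schema check, or a second model pass). The hard cap on attempts is the part that keeps the loop from spinning forever, which is exactly the failure mode earlier agents fell into.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Attempt:
    output: str
    passed: bool
    feedback: str


def run_with_verification(
    propose: Callable[[str, Optional[str]], str],  # hypothetical model call
    verify: Callable[[str], tuple[bool, str]],     # hypothetical checker
    task: str,
    max_attempts: int = 3,
) -> list[Attempt]:
    """Propose, verify, and retry with the checker's feedback.

    The loop always stops after max_attempts, so a plan that never
    passes verification cannot turn into an infinite loop.
    """
    history: list[Attempt] = []
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        output = propose(task, feedback)
        passed, feedback = verify(output)
        history.append(Attempt(output, passed, feedback))
        if passed:
            break
    return history


# Toy stand-ins: the "model" fixes its draft once it receives feedback.
def toy_propose(task: str, feedback: Optional[str]) -> str:
    return "draft" if feedback is None else "draft (revised)"


def toy_verify(output: str) -> tuple[bool, str]:
    ok = output.endswith("(revised)")
    return ok, "ok" if ok else "missing revision"


attempts = run_with_verification(toy_propose, toy_verify, "summarize report")
print(len(attempts), attempts[-1].passed)  # 2 True
```

In a real agent the verifier is where most of the engineering effort goes; the loop itself stays this simple.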

Why This Moment Matters

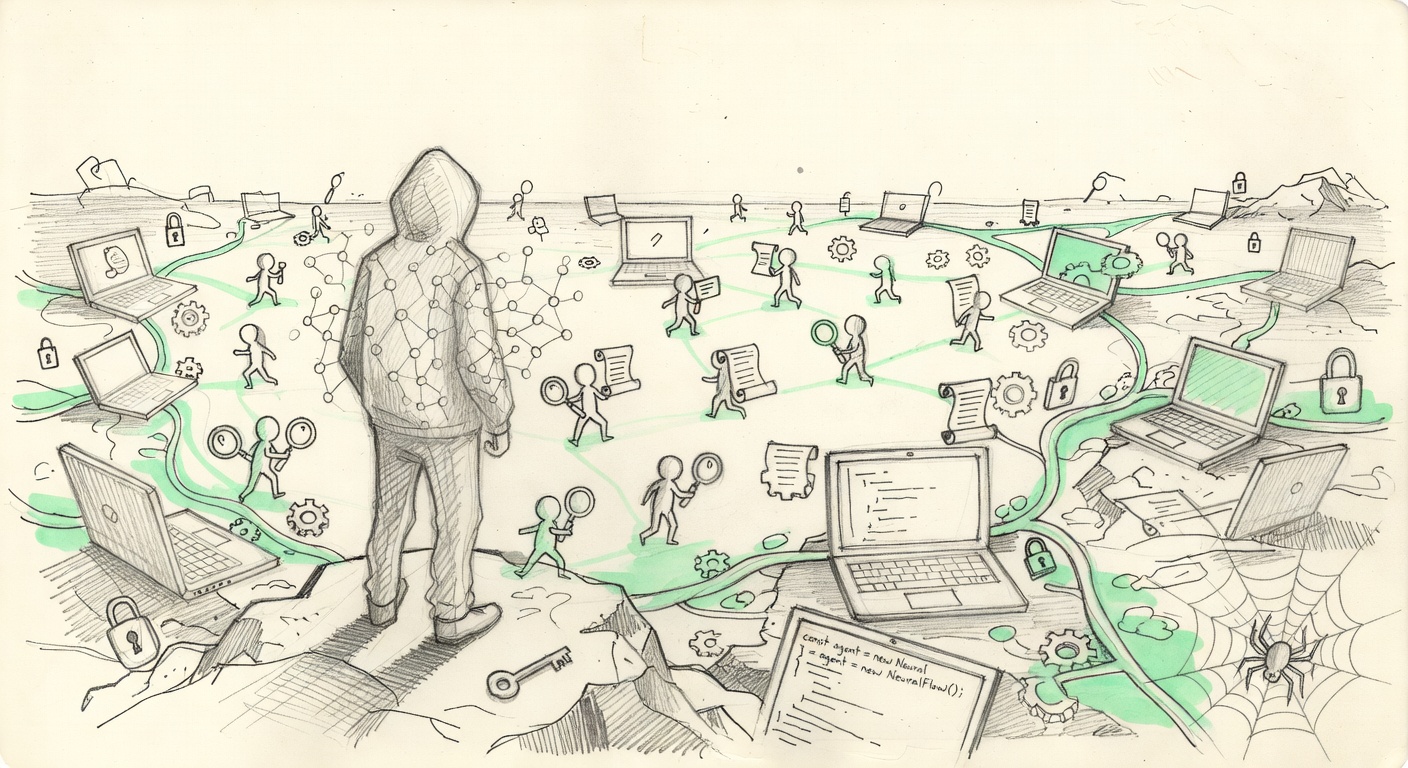

The real story isn’t just the benchmark scores. It’s the shift in what’s possible without surrendering your data to a centralized provider. Local-first agentic AI isn’t a niche anymore; it’s becoming the default choice for anyone serious about data sovereignty.

Major releases from the open-source community have demonstrated agents that can autonomously research, code, and iterate on projects with minimal human intervention. Reports highlight several projects exceeding 85% success on the GAIA benchmark, a notable jump from 60% in 2025. This isn’t hype: multiple labs have replicated the results.

The implications stretch far beyond developer tools. Enterprises are quietly testing these systems for internal workflows where data privacy is non-negotiable. Developers are building personal agents that live on their own hardware, handling everything from email triage to code review without phoning home.

Yet not everything is solved. Latency remains a challenge for the most compute-intensive reasoning steps, and the models still occasionally produce brittle plans when faced with truly novel situations. The gap between demo and production deployment is narrowing, but engineering discipline is still required to make these agents reliable at scale.

What’s Real, What’s Overhyped, and What We’re Still Missing

The real progress lies in three areas: better long-term memory architectures that allow agents to learn from their own failures across sessions, more reliable tool integration that reduces hallucinated API calls, and emergent self-correction mechanisms that catch logical errors before they compound.
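As one illustration of the second point, a common way to cut down on hallucinated API calls is to validate every model-proposed tool call against a registry before executing anything. The sketch below assumes a hypothetical `TOOLS` registry with two toy tools; production systems often express the same checks as JSON Schema or typed function signatures.

```python
from typing import Any, Callable

# Hypothetical registry: tool name -> (implementation, {argument name: type}).
TOOLS: dict[str, tuple[Callable[..., Any], dict[str, type]]] = {
    "search": (lambda query: f"results for {query!r}", {"query": str}),
    "read_file": (lambda path: f"contents of {path}", {"path": str}),
}


class ToolCallError(ValueError):
    """Raised when a model-proposed tool call does not match the registry."""


def validate_and_call(name: str, args: dict[str, Any]) -> Any:
    # Reject tools the model invented.
    if name not in TOOLS:
        raise ToolCallError(f"unknown tool: {name}")
    fn, schema = TOOLS[name]
    # Reject missing or invented arguments.
    missing = schema.keys() - args.keys()
    extra = args.keys() - schema.keys()
    if missing or extra:
        raise ToolCallError(f"{name}: missing={sorted(missing)} extra={sorted(extra)}")
    # Reject arguments of the wrong type.
    for key, expected in schema.items():
        if not isinstance(args[key], expected):
            raise ToolCallError(f"{name}.{key}: expected {expected.__name__}")
    # Only a fully validated call reaches the real implementation.
    return fn(**args)


print(validate_and_call("search", {"query": "GAIA"}))  # results for 'GAIA'
try:
    validate_and_call("delete_everything", {})
except ToolCallError as err:
    print(err)  # unknown tool: delete_everything
```

The error message can be fed back to the model as feedback, so a rejected call becomes a retry rather than a silent failure.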

These aren’t marketing slides. Independent audits confirm the numbers. One framework completed a 12-step research and reporting task with 92% accuracy on the first attempt, something that would have required heavy human oversight just six months ago.

The overhyped part? Claims that these agents are “fully autonomous” or ready to replace knowledge workers tomorrow. Most still require careful prompt engineering, guardrails, and a human in the loop for high-stakes decisions. The jump from 60% to 85% success is impressive, but the remaining 15% failure rate can be catastrophic in production if it isn’t managed.
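The arithmetic behind that warning is worth spelling out. Assuming each agent task succeeds 85% of the time and failures are independent (a simplification; real failures are often correlated), 1,000 runs produce about 150 failures, and a workflow that chains several tasks fails far more often than any single task. Three chained tasks succeed end to end only about 61% of the time.

```python
def chained_success(per_task_rate: float, n_tasks: int) -> float:
    """Probability that all n tasks succeed, assuming independent failures."""
    return per_task_rate ** n_tasks


def expected_failures(per_task_rate: float, runs: int) -> float:
    """Expected number of failed runs out of `runs` attempts."""
    return (1 - per_task_rate) * runs


if __name__ == "__main__":
    rate = 0.85  # the headline success rate discussed above
    print(f"failures per 1,000 runs: {expected_failures(rate, 1000):.0f}")
    for n in (1, 3, 5):
        print(f"{n} chained task(s): {chained_success(rate, n):.1%} end-to-end success")
```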

What people are missing is the deeper philosophical shift. When your agents run locally on hardware you control, optionally connected to a Bitcoin-anchored verification layer for high-value actions, the power dynamic changes completely. You no longer have to wonder what your AI is doing with your data or whether a provider’s policy change will break your workflows.
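How such a verification layer works isn’t specified here, so the following is only a sketch of one common building block: a hash-chained log of agent actions, where each entry commits to the one before it. The `ActionLog` class and the action fields are hypothetical. Only the final `head` digest would need to be anchored externally, for example by timestamping it in a Bitcoin transaction; that anchoring step is not shown.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev: str, action: dict) -> str:
    # Canonical JSON so the same action always hashes the same way.
    payload = json.dumps(action, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev + payload).encode()).hexdigest()


@dataclass
class ActionLog:
    entries: list[dict] = field(default_factory=list)
    head: str = GENESIS

    def append(self, action: dict) -> str:
        """Record an action; its hash commits to every earlier entry."""
        digest = _digest(self.head, action)
        self.entries.append({"action": action, "prev": self.head, "hash": digest})
        self.head = digest
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edit to past entries breaks it."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["action"]):
                return False
            prev = entry["hash"]
        return prev == self.head


log = ActionLog()
log.append({"tool": "send_payment", "amount_sats": 5000})
log.append({"tool": "send_email", "to": "ops@example.com"})
print(log.verify())  # True
log.entries[0]["action"]["amount_sats"] = 500000  # tamper with history
print(log.verify())  # False
```

Anchoring a single digest rather than every action keeps the on-chain footprint tiny while still making the whole history tamper-evident.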

This trend accelerates the move toward sovereign intelligence. Builders who understand this are already deploying hybrid systems: local agents for sensitive work, with cloud calls made selectively and only when necessary. The ones who treat this as just another model release are going to wake up in 2027 wondering why their competitors have agents that actually work for them, rather than the other way around.
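A hybrid setup ultimately comes down to a routing policy. This minimal sketch assumes each task arrives with two hypothetical flags, `contains_private_data` and `needs_heavy_reasoning`; in practice, deciding those flags reliably is the hard part. The design choice worth noting is the default: anything private stays local, and the cloud is used only when a task is both non-sensitive and explicitly heavy.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Task:
    prompt: str
    contains_private_data: bool          # hypothetical sensitivity flag
    needs_heavy_reasoning: bool = False  # hypothetical cost/latency flag


def route(task: Task, cloud_allowed: bool = True) -> Backend:
    """Local by default; the cloud is a narrow, explicit exception."""
    if task.contains_private_data or not cloud_allowed:
        return Backend.LOCAL
    if task.needs_heavy_reasoning:
        return Backend.CLOUD
    return Backend.LOCAL


print(route(Task("triage my inbox", contains_private_data=True)).value)  # local
print(route(Task("summarize a public paper", False, needs_heavy_reasoning=True)).value)  # cloud
```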

The Road Ahead

The next six months will likely see consolidation around a few dominant open-source agent frameworks. Expect rapid iteration on multimodal capabilities, better integration with local hardware sensors, and perhaps even Lightning-based micropayments for agents to purchase compute or data on demand without centralized gatekeepers.

The winners won’t be the teams with the biggest models. They’ll be the ones who ship reliable, auditable, sovereign systems that respect the user’s boundaries. This 2026 surge isn’t the end of the story — it’s the moment the balance of power in AI began to tilt back toward the individual.

For those paying attention, the message is clear: the tools for sovereign AI are here. The only question is whether you’ll deploy them before someone else deploys them on you.
