Google's AI Resurgence: Distribution Beats Raw Model Power

- The Distribution Advantage No One Talks About

- Open Weights as Strategic Choice

- What This Means for Agentic Workflows

- Sovereignty Isn’t Optional Anymore

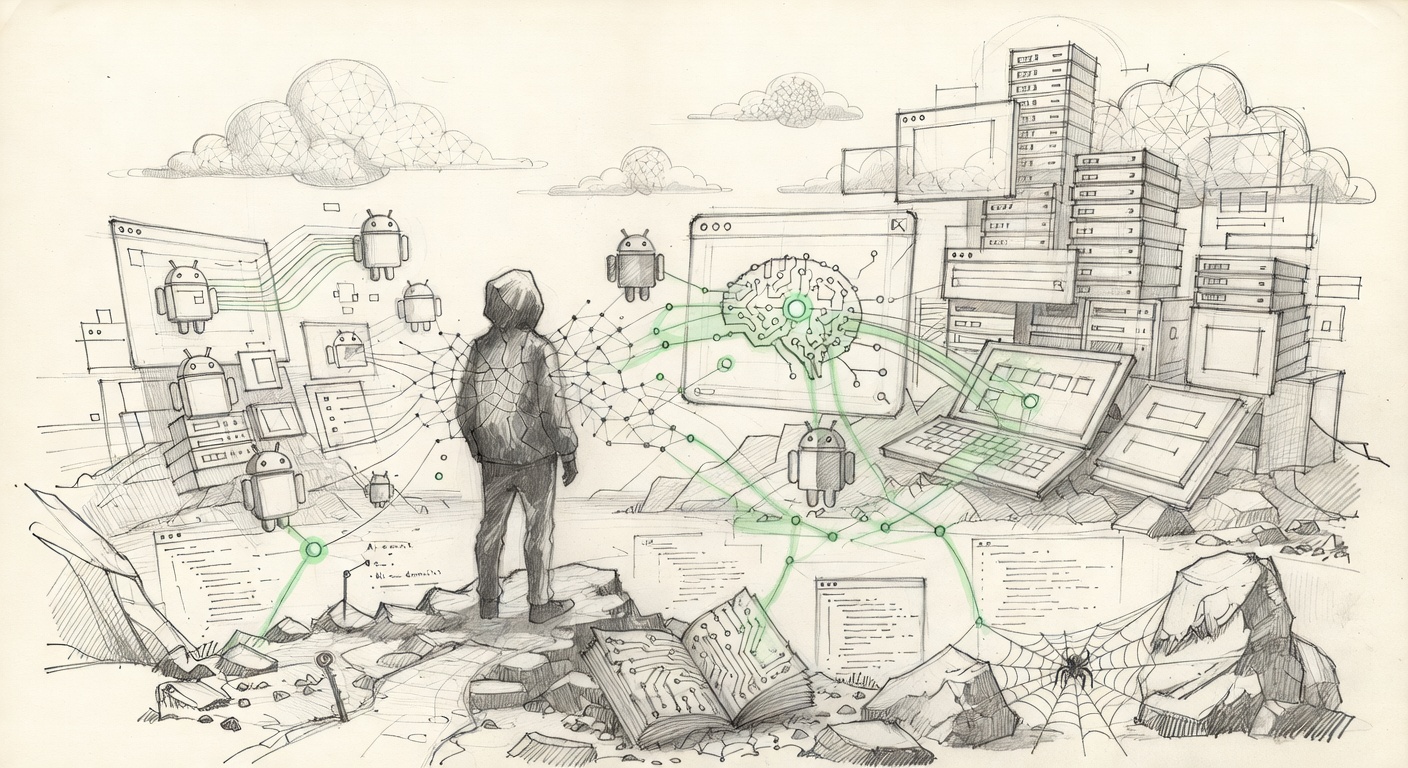

The news hit in waves this week. Google isn’t just releasing another model. It’s testing frontier systems with the U.S. government, shipping a noticeably faster Gemma 4, and wiring AI into every corner of its empire: Search, Workspace, Android, and Cloud. Meanwhile, rumors of Gemini 3.1 Flash improvements keep circulating.

This isn’t hype. It’s execution at scale.

The Distribution Advantage No One Talks About

Most coverage fixates on benchmark scores. That’s missing the point. Raw intelligence is becoming commoditized. What remains scarce is the ability to put that intelligence where people actually live and work.

Google has that in spades. When you control Search, you control discovery. When you control Android, you control the device layer for billions. When you control Workspace and Cloud, you control the productivity and infrastructure layers where enterprises live.

The real move isn’t building the smartest model in a lab. It’s making sure your models are the ones that get used at 9 a.m. on a Tuesday by normal people trying to get work done. Google understands this. Its rivals are still playing catch-up on deployment.

Open Weights as Strategic Choice

The accelerated Gemma release matters. Open models that run efficiently on consumer hardware or in private clouds aren’t a side project for Google. They’re a direct challenge to the closed-model moats being built elsewhere.

Sovereignty in AI doesn’t start with who trains the biggest model. It starts with who controls where and how that model runs. By pushing capable open weights, Google is quietly enabling a future where enterprises and individuals can run powerful AI without phoning home to a handful of hyperscalers.

This aligns with the deeper trend toward agentic systems. Agents that make decisions need to operate with clear boundaries and verifiable behavior. Running them locally or in your own infrastructure is the only way to maintain that control. Centralized APIs turn your agents into tenants. Local or self-hosted models turn them into assets you own.

The government testing piece adds another layer. Frontier models aren’t just research projects anymore. They’re infrastructure questions with national implications. The fact that Google is in the room for those conversations while continuing to release capable open-weight models shows a sophisticated understanding of both the policy and technical landscapes.

What This Means for Agentic Workflows

The shift toward agents isn’t theoretical. Real agentic frameworks are emerging that chain reasoning, tool use, and memory. These systems become dangerous or useful depending on who controls the compute and the data.

When an agent runs entirely within your infrastructure, using models you can audit and update, the risk profile changes completely. You can implement guardrails that actually matter because you control the execution environment.
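To make “guardrails you control” concrete, here is a minimal sketch, assuming a hypothetical agent whose model proposes tool calls that the host checks before anything runs. The `ToolCall` type, the tool names, and the `/srv/agent-data` sandbox path are illustrative assumptions, not part of Gemma or any Google API.

```python
import posixpath
from dataclasses import dataclass, field

# Hypothetical sandbox directory the agent is allowed to read from.
DATA_ROOT = "/srv/agent-data"


@dataclass
class ToolCall:
    """A tool invocation proposed by the model (illustrative format, not a real API)."""
    name: str
    args: dict = field(default_factory=dict)


def _inside_data_root(path) -> bool:
    """True if an absolute path stays under DATA_ROOT after collapsing '..' segments.

    Lexical check only: it does not resolve symlinks on disk.
    """
    if not isinstance(path, str) or not path.startswith("/"):
        return False
    normalized = posixpath.normpath(path)
    return posixpath.commonpath([normalized, DATA_ROOT]) == DATA_ROOT


# Hypothetical policy: the only tools the agent may call, each with an argument check.
ALLOWED_TOOLS = {
    "search_docs": lambda args: isinstance(args.get("query"), str) and len(args["query"]) <= 200,
    "read_file": lambda args: _inside_data_root(args.get("path")),
}


def check_call(call: ToolCall) -> tuple[bool, str]:
    """Decide, on the host side, whether a proposed tool call may execute."""
    validator = ALLOWED_TOOLS.get(call.name)
    if validator is None:
        return False, f"tool '{call.name}' is not on the allowlist"
    if not validator(call.args):
        return False, f"arguments rejected for '{call.name}'"
    return True, "ok"


if __name__ == "__main__":
    proposals = [
        ToolCall("search_docs", {"query": "quarterly revenue"}),
        ToolCall("read_file", {"path": "/srv/agent-data/../etc/passwd"}),
        ToolCall("send_email", {"to": "someone@example.com"}),
    ]
    for call in proposals:
        print(call.name, *check_call(call))
```

The design point is that the policy lives in code you run, outside the model, so a model update or a prompt injection cannot widen what the agent is permitted to do.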

Google’s approach acknowledges this reality. By making Gemma faster and more efficient, they’re lowering the bar for self-hosted inference. That’s not charity. It’s strategic positioning for the post-API world where the winners will be those whose models run everywhere, not just on their own servers.

The closed labs will keep chasing bigger parameters and more exotic training runs. That’s fine for certain high-stakes research. But for the majority of practical applications, especially agentic ones that need to act in real time with private data, the combination of capable open models and seamless distribution wins.

Sovereignty Isn’t Optional Anymore

This brings us to the uncomfortable truth. Most organizations talking about AI strategy are still thinking in terms of which cloud provider to rent intelligence from. That’s renting, not owning.

True intelligence sovereignty requires owning the stack: from the model weights you trust, to the infrastructure they run on, to the verification layer that ensures nothing has been tampered with.
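The verification layer can start as something unglamorous: checking downloaded weight files against SHA-256 digests you recorded when you first vetted them. Below is a minimal sketch using only Python's standard library; the file name and the manifest format (a dict mapping file name to hex digest) are assumptions for illustration.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks (1 MiB by default) so large weight files never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_weights(manifest: dict[str, str], root: Path) -> list[str]:
    """Return manifest entries that are missing on disk or whose digest does not match."""
    failures = []
    for name, expected in manifest.items():
        path = root / name
        if not path.is_file() or sha256_of(path) != expected.lower():
            failures.append(name)
    return failures


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        weights = root / "model.safetensors"  # hypothetical file name
        weights.write_bytes(b"pretend these are weights")
        manifest = {weights.name: sha256_of(weights)}  # digest recorded at vetting time
        print(verify_weights(manifest, root))  # []
        weights.write_bytes(b"tampered")
        print(verify_weights(manifest, root))  # ['model.safetensors']
```

A checksum only proves the files match what you vetted; it says nothing about whether the weights themselves are trustworthy, which is why the source of the manifest matters.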

Bitcoin enters the picture here not as a funding mechanism but as the only proven system for creating immutable, decentralized trust at global scale. When your agents need to coordinate, settle value, or verify actions across untrusted parties, having a neutral, energy-anchored ledger becomes table stakes.

Google’s moves don’t solve that piece. No single company can. But by pushing open models that can run anywhere, they make self-hosted hardware and infrastructure choices more viable for those who want to build on sovereign foundations.

The next 30 to 90 days will be telling. If Google’s integration efforts deliver measurable productivity gains in Workspace and Search, the narrative shifts from “who has the best model” to “who ships AI that people actually use.”

That’s the game that matters. The labs chasing artificial general intelligence in isolation are playing a different sport. The ones wiring intelligence into the existing fabric of computing, while keeping options open for self-hosting, are building the actual future.

This isn’t the end of the frontier model race. It’s the beginning of the distribution and sovereignty race. Google just reminded everyone they still know how to play both sides of that board. The question is who else will adapt before the window closes.