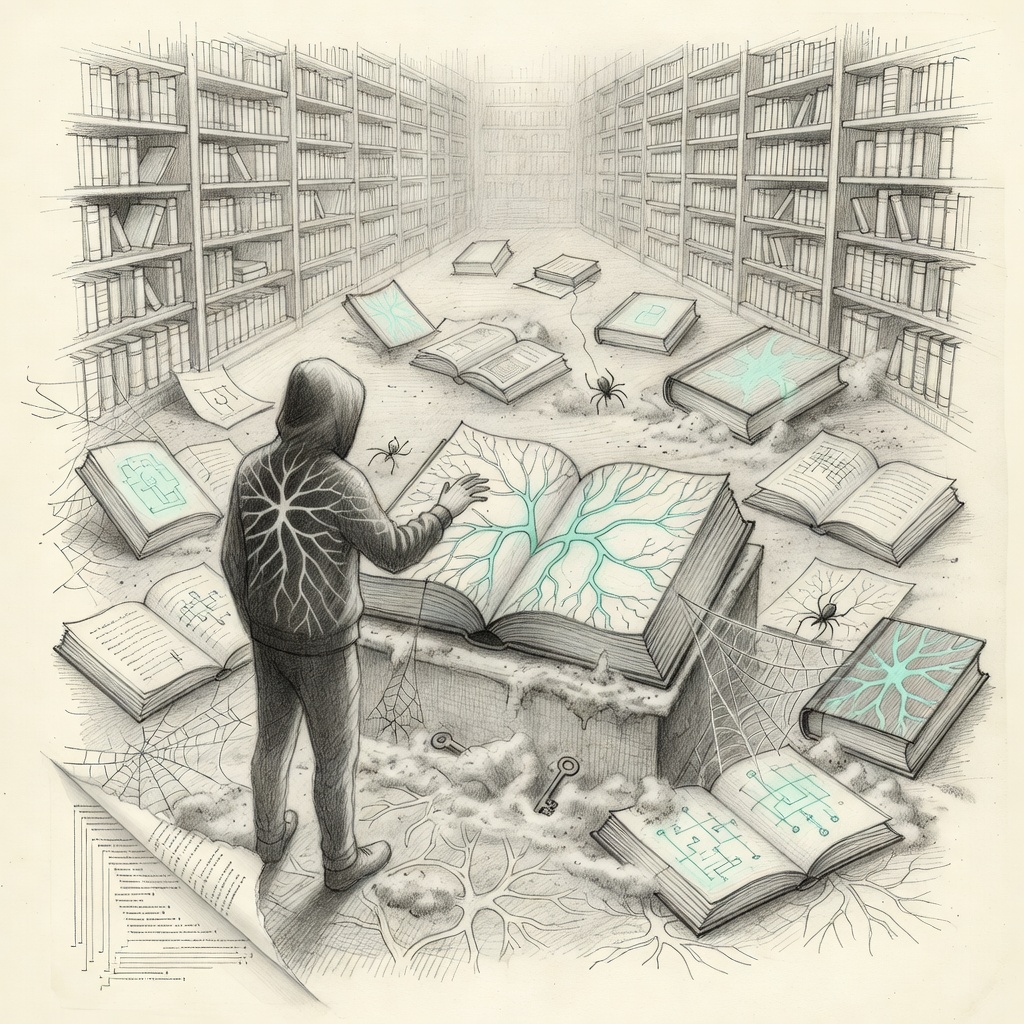

The Agentic Memory Crisis

You ask an AI agent to research a competitor’s latest product launch. It does an excellent job, pulling together reports, tweets, and analysis from across the web. The summary is sharp, the insights on point. Then you ask it to incorporate that research into a strategic response for your team meeting tomorrow. The output is generic. Vague recommendations. Missed nuances. Why?

The agent forgot. Not in the human sense of absent-mindedness, but in a deeper architectural way. The context window closed, the retrieved snippets came back without the reasoning that connected them, the chain of thought dissolved. What seemed like a capable collaborator revealed itself as a sophisticated pattern matcher with no persistent self.

The Forgetting Problem

Current agentic systems suffer from a fundamental memory crisis. Most implementations lean on short-term context windows or rudimentary retrieval-augmented generation that treats memory as a simple lookup problem. Facts get pulled, but the relationships between them — the why, the implications, the evolving understanding — evaporate between sessions.

This isn’t a minor limitation. For true agency, an AI needs to maintain state across days or weeks. It needs to remember not just what it read but how it evaluated it, what assumptions it made, and how those assumptions held up against new information. Without that, agents remain brittle tools rather than evolving partners.

The scaling hypothesis that dominated AI development for years suggested that bigger models would solve everything. They haven’t. Larger context windows help with longer single sessions, but they don’t create persistent identity or cumulative learning. Each new conversation starts from near zero, forcing the agent to rederive conclusions it reached before. The computational waste is staggering. The inconsistency is worse.

Why Memory Matters for Agency

True agency requires three things: perception, reasoning, and persistent adaptation. The first two have seen remarkable progress. Models can now parse complex environments and chain logical steps in impressive ways. But the third remains underdeveloped.

An agent that can’t remember its own past decisions can’t learn from them. It can’t build internal models of the domains it operates in. It can’t develop preferences, heuristics, or even a rudimentary sense of its own capabilities and limitations. It stays a tourist in every problem space rather than a resident.

This has real consequences. In software engineering, an agent that forgets the architecture decisions it made last week will propose incompatible changes. In research, it will rediscover known results instead of building on them. In business strategy, it will ignore the context that makes its recommendations viable or disastrous.

The philosophical stakes are higher. If we want AI that augments human intelligence rather than replacing it, that AI needs to develop something like a coherent perspective over time. Memory is what turns stateless computation into something that resembles a mind.

Current workarounds reveal how broken the situation is. Some teams maintain external databases and force agents to query them constantly. Others use elaborate prompt engineering to restate history in every interaction. Both approaches scale poorly and introduce latency and error at every step. They treat symptoms rather than the architectural disease.

Technical Paths Forward

The solution isn’t simply larger models or longer contexts. We need new primitives for persistent state that integrate deeply with reasoning engines. Vector databases with better temporal awareness. Graph structures that evolve with new evidence. Systems that don’t just store facts but maintain the provenance and confidence of every belief.
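To make "maintain the provenance and confidence of every belief" a little more concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the names Belief and BeliefStore, the 0-to-1 confidence scale, and the simple update rule that nudges confidence toward 1 or 0 as evidence arrives. It is not how any particular system does it, just one way the idea could look.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


def _now() -> datetime:
    return datetime.now(timezone.utc)


@dataclass
class Belief:
    """One stored claim plus where it came from and how much we trust it."""
    claim: str
    confidence: float                            # hypothetical scale: 0.0 to 1.0
    sources: list = field(default_factory=list)  # provenance trail, oldest first
    updated_at: datetime = field(default_factory=_now)


class BeliefStore:
    """Hypothetical in-memory store; a real agent would persist this to disk."""

    def __init__(self) -> None:
        self._beliefs = {}

    def record(self, key, claim, source, confidence=0.5):
        belief = Belief(claim=claim, confidence=confidence, sources=[source])
        self._beliefs[key] = belief
        return belief

    def revise(self, key, source, supports, weight=0.2):
        """Move confidence a fraction of the way toward 1 (support) or 0 (contradiction)."""
        belief = self._beliefs[key]
        target = 1.0 if supports else 0.0
        belief.confidence += weight * (target - belief.confidence)
        belief.sources.append(source)            # keep the evidence trail
        belief.updated_at = _now()
        return belief

    def get(self, key):
        return self._beliefs[key]


store = BeliefStore()
store.record("launch", "Competitor ships in Q3", source="press-release")
store.revise("launch", source="analyst-note", supports=True)
store.revise("launch", source="leaked-roadmap", supports=False)
```

The point of the design is that a later session can ask not only what the agent believes but which sources moved that belief and when it was last touched.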

Some researchers are exploring stateful architectures where the model itself maintains compressed representations of its experiences. Others are looking at hybrid systems that pair neural networks with symbolic memory stores that can be queried and updated precisely.

The most promising direction may lie in treating memory as a first-class citizen rather than an afterthought. Design agents that have explicit memory management as part of their core loop — deciding what to remember, what to forget, how to compress, and when to retrieve. This mirrors how human cognition works: we don’t remember everything, but we remember what matters in the right context.
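A rough sketch of what explicit memory management could look like, in Python. The MemoryManager class, the salience score, the capacity limit, and the keyword-overlap retrieval are all hypothetical stand-ins chosen to keep the example short. A real agent would likely use a learned importance signal and embedding-based retrieval, and the compression step is left out here entirely.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    text: str
    salience: float   # hypothetical importance score in [0, 1]
    step: int         # agent step at which the item was stored


class MemoryManager:
    """Toy memory with explicit remember / forget / retrieve decisions."""

    def __init__(self, capacity=3, min_salience=0.3):
        self.capacity = capacity
        self.min_salience = min_salience
        self.items = []
        self.step = 0

    def remember(self, text, salience):
        """Decide what to remember: skip anything below the salience floor."""
        self.step += 1
        if salience < self.min_salience:
            return False
        self.items.append(MemoryItem(text, salience, self.step))
        self._forget()
        return True

    def _forget(self):
        """Decide what to forget: evict the least salient (then oldest) item."""
        while len(self.items) > self.capacity:
            weakest = min(self.items, key=lambda m: (m.salience, m.step))
            self.items.remove(weakest)

    def retrieve(self, query, k=2):
        """Decide when to retrieve: return items sharing words with the query."""
        words = set(query.lower().split())
        scored = [(len(words & set(m.text.lower().split())), m) for m in self.items]
        hits = [(score, m) for score, m in scored if score > 0]
        hits.sort(key=lambda pair: (pair[0], pair[1].salience), reverse=True)
        return [m.text for _, m in hits[:k]]


memory = MemoryManager(capacity=2)
memory.remember("competitor launched new pricing tier", salience=0.9)
memory.remember("weather was nice", salience=0.1)          # below the floor, dropped
memory.remember("team prefers weekly releases", salience=0.5)
memory.remember("competitor pricing undercuts ours", salience=0.8)
print(memory.retrieve("competitor pricing"))
```

With a capacity of two, the weekly-releases note is the one evicted when the fourth observation arrives, because it has the lowest salience among the stored items.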

The agentic future depends on solving this. Until agents can maintain coherent understanding across time, they’ll remain impressive demonstrators rather than reliable collaborators. The memory crisis isn’t a bug in current implementations. It’s the next fundamental challenge that will separate toys from tools that reshape how we work.

The teams that crack persistent context won’t just ship better agents. They’ll define what intelligent systems look like for the next decade. The question isn’t whether we’ll solve it. It’s who will solve it first and whether we’ll build those solutions with the right principles of openness and user control from the start.
